How Passes Moderates Content: AI Tools, Human Review, and Creator Transparency

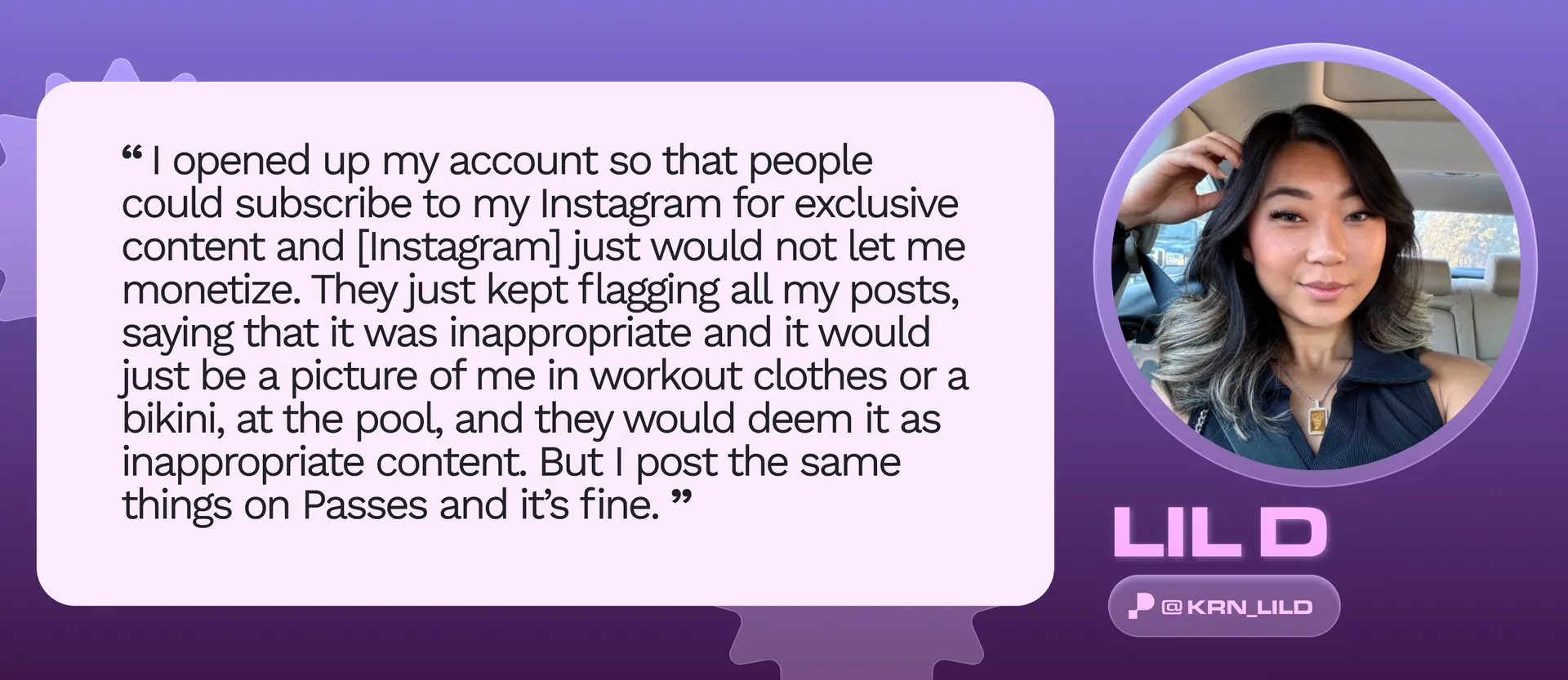

In this final post of our Trust and Safety Creator Series, we take a closer look at how Passes moderates content and what these safeguards mean for your experience as a creator.

At Passes, content moderation is not just a policy. It is a commitment to every creator and fan on the platform. We carefully balance the enforcement of our content standards with creator empowerment, so that the rules are clear, the process is transparent, and creators always know where they stand.

Here is a full breakdown of how content moderation works on Passes and what it means for you as a creator.

Passes Content Moderation Policy

Passes has strict policies prohibiting explicit content on the platform. Our content moderation process is proactive, meaning we scan content at the point of upload rather than waiting for reports to come in. This protects all users and maintains the safe, creator-first environment that Passes is built on.

How Passes Moderates Content: A Two-Layer System

Passes uses a two-layer moderation process that combines the speed of AI with the judgment of trained human reviewers.

Layer 1: AI Content Review

When content is uploaded to Passes, it is immediately scanned by three industry-leading AI moderation tools:

- Amazon Rekognition Content Moderation: Detects inappropriate imagery and content at scale

- Hive Moderation: Provides additional AI classification across a wide range of content categories

- Microsoft PhotoDNA: Uses image hashing to match uploads against known harmful imagery and help prevent its distribution

These tools work together to provide fast, consistent, and comprehensive screening of all content uploaded to the platform.

Layer 2: Human Review by the Trust and Safety Team

Content flagged by the AI layer is then reviewed by members of the Passes Trust and Safety team. Our team reviews flagged content according to Passes' classification standards, verifies the accuracy of the AI determination, and updates the content status if needed.

This two-layer approach ensures that every automated flag is checked by a real person before a final decision is made. For a full breakdown of our content statuses and appeals process, read more here.

Why Transparency Is Central to How We Moderate

We understand that content moderation can feel frustrating for creators, especially when decisions are made without a clear explanation. That is why Passes puts transparency first at every step of the process.

What that looks like in practice:

- Our Community Guidelines are written in plain English and organized into clear categories so creators always know what is and is not permitted

- Content statuses clearly communicate the outcome of moderation reviews, including whether content is approved, restricted to DMs and members, or banned

- The Appeals tool in your Vault gives creators a direct path to challenge moderation decisions they believe are incorrect, with a review guaranteed within 24 hours

- We hosted a live webinar dedicated entirely to our content moderation policies, with a full article summary and recording available here

Passes Trust and Safety Creator Series: What We Covered

This post is the final installment in the Passes Trust and Safety Creator Series. Across the series, we covered the full picture of how Passes approaches creator and platform safety, including our community guidelines, content statuses, the appeals process, and now content moderation.

We hope this series gave you a clearer, more confident understanding of how Passes works to protect you and your community.

Frequently Asked Questions About Passes Content Moderation

How does Passes moderate content on its platform? Passes uses a two-layer content moderation system. Layer 1 uses three AI tools (Amazon Rekognition Content Moderation, Hive Moderation, and Microsoft PhotoDNA) to scan all content at the point of upload. Layer 2 involves human review by the Passes Trust and Safety team, who verify AI classifications and update content statuses where needed.

What AI tools does Passes use for content moderation? Passes uses three AI moderation tools: Amazon Rekognition Content Moderation, Hive Moderation, and Microsoft PhotoDNA. Together, these tools provide fast, comprehensive screening of all content uploaded to the platform before it goes live.

Does Passes allow explicit content? No. Passes has strict policies prohibiting explicit content on the platform. All content is proactively scanned at the point of upload to ensure compliance with these policies, and content found to be in violation is removed.

What happens if my content is flagged on Passes? If your content is flagged during the moderation process, it will be reviewed by the Passes Trust and Safety team. You will be able to see the status of your content in your Vault. If you believe a moderation decision is incorrect, you can submit an appeal directly through the Vault, and it will be reviewed within 24 hours.

How do I appeal a content moderation decision on Passes? Go to your Vault in your Passes account, find the content you want to appeal, and click the Appeal button. Appeals are prioritized in the moderation queue and reviewed within 24 hours of submission. Read more about the appeals process here.

Where can I learn more about Passes content policies? You can read the full Passes Community Guidelines for a plain-English overview of what content is and is not permitted on the platform. You can also visit the Passes Help Center for additional resources or to speak with the support team directly.

How does Passes support creators who have concerns about content moderation? Passes leads with transparency at every step of the moderation process. Creators have access to clear content statuses, an appeals tool in their Vault, plain-English community guidelines, and dedicated support through the Creator Success Team and Passes Help Center.

We Are Always Here to Support Your Journey on Passes

Content moderation exists to protect every person on the platform, creators and fans alike. Our goal is never to create friction for creators doing great work. It is to ensure that Passes remains a safe, trusted, and creator-first space for everyone.

If you want to learn more about our trust and safety processes or need help with a specific issue, visit the Passes Help Center, where you can speak with our support team or browse our full library of resources.

Thank you for following along with our Trust and Safety Creator Series. We are always here to support your journey on Passes.
